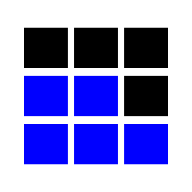

Array

Senior insight

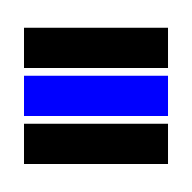

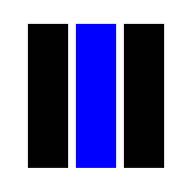

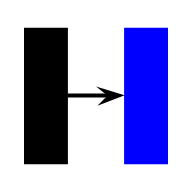

Contiguous memory is the secret. Access is O(1) because the CPU computes `base + i · stride` and prefetches the next cache line — linked lists lose on every traversal for the same Big-O because they thrash the cache. In realtime systems, pre-size the array or use a ring buffer to avoid the O(n) doubling spike that tail-latency charts will show.

Common traps

- Mid-array insert / delete is O(n), even though access is O(1)

- Treating amortized O(1) append as if every single append is O(1) — one in log₂n calls is O(n)

Follow-up questions

- ? When would a linked list actually beat a dynamic array in practice?

- ? How does cache-line size (typically 64 bytes) affect the constant factor?